A line in the file consisted of an object identifier and its payments for decades. The ETL project task was to create a payment projection for a period of 70 to 100 years. This file type was the largest in the project. In all, about 184 million rows had to be processed. This file had 220,000 rows, each of which had 840 delimited values and it had to be turned into 70 million rows for a target table. My task was to load data from a large comma-delimited file.

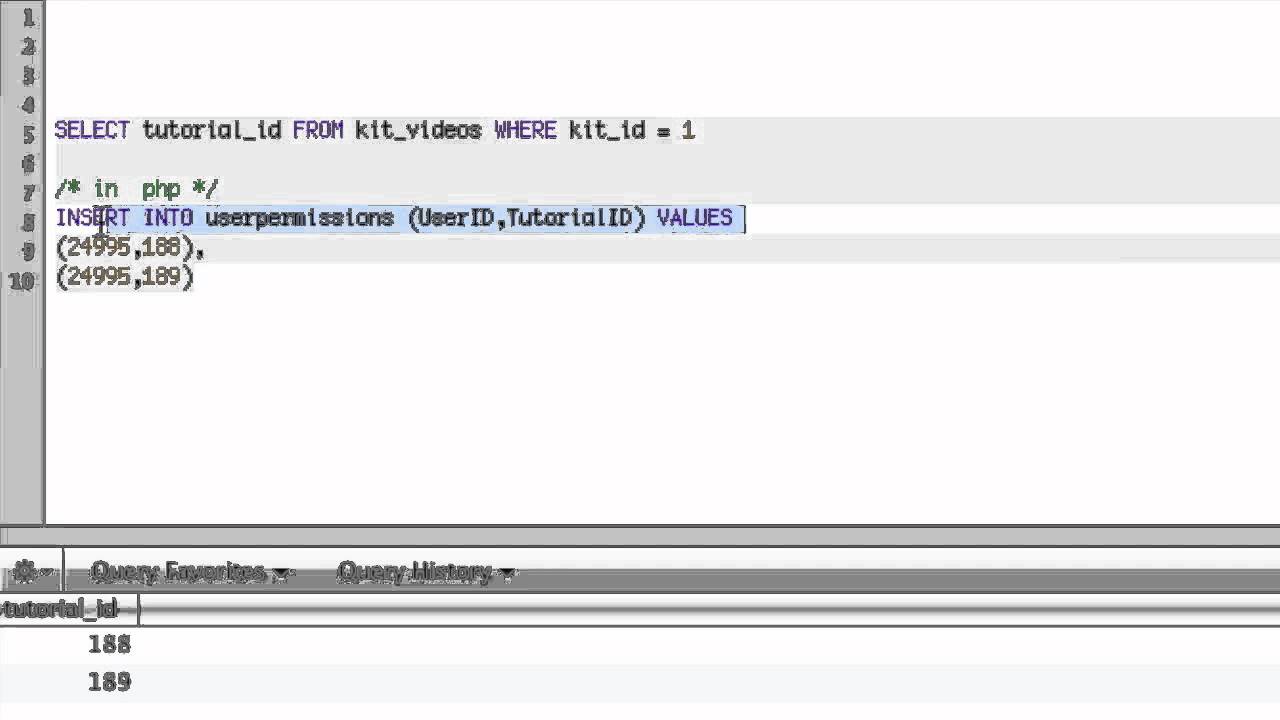

Last year, I participated in an Extract, Transform, Load (ETL) project. The more data you’re dealing with, the more important it is to find the quickest way to import large quantities of data. In this article I’ll describe how I experimented and adjusted my methods to find the best way to insert a million of rows of data into SQL Server. What’s the best way to use statements that insert several rows at a time, and how many rows should I try to pass at a time? For performance, should they be wrapped in explicit transactions or should I use auto-commit? How do I pass the parameters to the stored procedure? Which is the best, and fastest, approach? I had to address some questions to make sure the experiments would produce the quickest times. I wanted to find the best way to import data into SQL Server, so I decided to experiment with a couple of different data load methods to compare the results. From that stored procedure, you’ll probably want to insert the data into a table by using statements that insert several rows at a time. You can use bulk copy (bcp), insert the data with a series of INSERT statements, use a parameterized prepared statement from the client, or call a stored procedure with the data passed as a parameter. When faced with having to import data directly into SQL Server from a client application, you have a number of choices.

0 Comments

Leave a Reply. |

Details

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed